A hidden bottleneck is growing within systems deployed on data center floors today. It is being driven by ongoing, widespread changes to IT system designs, including virtualization, modifications to storage networking, and the needs of Big Data systems. The resulting requirement is that core network port capacity be increased to take advantage of the 40 and 100 Gigabit Ethernet (GbE) interfaces that are now routinely available on most enterprise-class network switches.

John Burdette Gage is credited with creating the phrase “the network is the computer” in 1984, while an employee of Sun Microsystems. Now, 30 years later, the primary focus for many IT initiatives should still be “It’s all about the network”.

Bandwidth requirements for virtualized servers are one reason why serious consideration has to be given to upgrading network core infrastructure.

As an example, you can find the reference architecture for movement of virtual machines in a virtualized environment here.

A virtualized infrastructure needs plenty of network bandwidth. With an average of 5, 10, or 20 servers being virtualized onto one physical server, and each virtual server requiring at least 1 GbE of bandwidth, the typical physical server needs multiple physical 10 GbE interfaces.
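As a rough sketch of the sizing arithmetic above, the snippet below multiplies the number of virtual servers by the 1 GbE per-VM minimum and divides by the capacity of a 10 GbE interface. The function name `nics_needed` and its parameters are illustrative assumptions, and the result is a floor: it leaves no headroom for migration or storage traffic.

```python
import math


def nics_needed(vm_count, per_vm_gbps=1.0, nic_gbps=10.0):
    """Return (aggregate demand in Gb/s, minimum number of NICs to carry it).

    Hypothetical helper: assumes every VM needs its full per-VM bandwidth
    at the same time and that NIC capacity is fully usable.
    """
    demand_gbps = vm_count * per_vm_gbps
    return demand_gbps, math.ceil(demand_gbps / nic_gbps)


if __name__ == "__main__":
    # Consolidation ratios mentioned in the text: 5, 10, or 20 VMs per host.
    for vms in (5, 10, 20):
        demand, nics = nics_needed(vms)
        print(f"{vms} VMs -> {demand:.0f} Gb/s demand, at least {nics} x 10 GbE")
```

At 20 virtual servers the aggregate demand already fills two 10 GbE interfaces before any headroom is added.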

Capabilities such as live migration, whether for load balancing or other reasons, can place an additional network burden on a physical server, especially since these moves normally occur during periods of peak activity.

As an example, suppose a typical VM that is 4 gigabytes in size needs to be migrated from one physical host to another. Over a 1 GbE link, the migration could take 20 minutes or more. During that time, factors such as bandwidth limiting, CPU utilization, and de-caching of RAM can adversely affect the other VMs on the physical host, and this impact could increase response times by a factor of 2 or more. Using a 10 GbE interface can shorten the period of performance degradation for all other VMs on the same physical host by a factor of two or more. In addition, having more available bandwidth greatly reduces the risk of VM host failure or reset due to network port saturation.
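To put the link-speed comparison in numbers, here is a minimal sketch of the idealized wire time for moving a 4 gigabyte image. It is only a lower bound: it ignores protocol overhead, bandwidth limiting, and memory pages that must be re-sent while the VM keeps running, so observed migration times will be considerably longer. The function name and the `efficiency` parameter are assumptions introduced for illustration.

```python
def ideal_transfer_seconds(size_gigabytes, link_gbps, efficiency=1.0):
    """Idealized time to push `size_gigabytes` over a link of `link_gbps`.

    `efficiency` (hypothetical knob, 0 < efficiency <= 1) scales the link
    down to the share of bandwidth the migration is actually allowed to use.
    Uses decimal units: 1 gigabyte = 8 gigabits.
    """
    if not 0 < efficiency <= 1:
        raise ValueError("efficiency must be in (0, 1]")
    gigabits = size_gigabytes * 8
    return gigabits / (link_gbps * efficiency)


if __name__ == "__main__":
    for link in (1, 10):
        seconds = ideal_transfer_seconds(4, link)
        print(f"4 GB over {link} GbE: at least {seconds:.1f} s on the wire")
```

Lowering `efficiency` models a migration that is throttled to a fraction of the link, which is one reason real transfers stretch well beyond the ideal figure.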

With the increased proliferation of 10 GbE interfaces on servers and other devices, blocking issues are arising within the data center network. The ratio of 10 GbE interfaces on core network switches to 10 GbE interfaces on other devices within the data center rapidly falls below one to one, which results in increased network contention, slower response times due to bandwidth unavailability, and an increased likelihood of network failure due to timeouts.
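The ratio described above is usually expressed the other way around, as an oversubscription ratio: total edge bandwidth divided by total core bandwidth, where anything above 1.0 means the core can block. The sketch below computes it; the port counts are hypothetical values chosen for illustration, not measurements from any particular data center.

```python
def oversubscription_ratio(edge_ports, edge_gbps, core_ports, core_gbps):
    """Edge bandwidth divided by core bandwidth; > 1.0 means the core can block."""
    return (edge_ports * edge_gbps) / (core_ports * core_gbps)


if __name__ == "__main__":
    # Hypothetical example: 48 server-facing 10 GbE ports behind one core tier.
    scenarios = {
        "8 x 10 GbE core ports": (8, 10),
        "4 x 40 GbE core ports": (4, 40),
        "4 x 100 GbE core ports": (4, 100),
    }
    for label, (ports, speed) in scenarios.items():
        ratio = oversubscription_ratio(48, 10, ports, speed)
        print(f"{label}: {ratio:.1f}:1 oversubscription")
```

With the same number of edge ports, moving the core interconnects from 10 GbE to 40 or 100 GbE is what brings the ratio back toward one to one.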

Changes to storage area networks are also causing bandwidth issues within core networks.

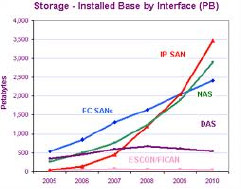

The following chart, though a little dated shows the growth of iSCSI and NAS based storage solutions when compared to fiber SAN:

Providing storage networks with enough bandwidth to avoid degrading response times is also becoming an issue as the conversion from Fibre Channel to iSCSI storage area networks (SANs) proceeds. The primary factor driving this conversion is cost. On a port-for-port basis (for example, comparing an 8 Gbit Fibre Channel port to a 10 GbE port), Fibre Channel ports can cost two to three times as much per unit of bandwidth.

There are both pros and cons to using iSCSI as the primary transport mechanism for SANs. One of the biggest impacts on an existing environment is that, without expansion of the existing core network, adding the bandwidth required for a SAN architecture can oversubscribe existing networks. In a majority of cases, this oversubscription will likely have a direct impact on the overall network’s response times.

As our customer base has moved from a standard business-day (5x9) operational/production environment to a 7x24 one, anything that increases network response time is detrimental to growth and user satisfaction.

Large-scale analytical engines, such as big data systems, are overburdening core networks.

The recent growth in the number and types of analytical systems, such as big data, has become a source of competition for bandwidth and, as a result, a cause of lower network performance and slower application response times on most networks.

A typical big data system, over the start-to-finish cycle that turns raw data into usable information, will transfer large quantities of data multiple times between storage systems and servers. Data is initially collected from raw sources, transformed into entries in a database or database-like format, and then accessed from the database using specified queries. The outputs of these queries are then formatted into some type of user-accessible data (such as a report), which can then be acted upon. This process of moving, transforming, querying, and reporting all takes bandwidth, in many cases three to four times or more the bandwidth needed by average database systems today.

All of the above influencers (virtualization, iSCSI SANs, and “big data” systems) clearly require significant bandwidth at the core enterprise level to sustain operations and performance and to meet output requirements and expectations.

The core of the problem is that the switches within most data centers were not designed to handle the recent and ongoing proliferation of 10 GbE interfaces at the standard server level. Root cause analyses of current issues at many locations indicate that 10 GbE interfaces are no longer sufficient to support daily operations. Serious consideration has to be given to upgrading existing core switches to use 40 and 100 GbE ports as core interconnects. Production requirements continue to expand in complexity, scale, and bandwidth. Without upgrading the ports within a data center’s core network to these speeds, applications either will experience or are already experiencing operational impact and performance degradation. Here is a whitepaper where you can find additional information on how a large network vendor recommends dealing with backbone bandwidth needs.